The Rotten Tomatoes of Everything: AI and the Pursuit of Objective Reality

I don’t drink much these days. I reserve alcohol for awkward social interactions and meaningful moments with people close to me. One of those moments was last October.

I was in Austin, Texas, with a friend for the Formula 1 race. It was the first time I had seen a race in person. We started our days with Piña Coladas and ended them with the finest gin and tonics on 6th Street.

Around midnight on Saturday, we began the philosophical debate phase of the weekend. It’s that time when you and your tipsy friend transform into Plato and Aristotle, eager to solve the mysteries of the universe.

Our topic that evening was “Why do movies suck?” My friend thinks the 1980s were the peak of movie-making. He points to classics like “Three Amigos!” and “Coming to America” as irrefutable evidence. Further, he claims Rotten Tomatoes is responsible for the precipitous decline of the movie industry.

Let’s ignore the fact that Rotten Tomatoes wasn’t introduced until 1998. Let’s also set aside any debate over whether the 1980s were the peak of movie-making (obviously, they were). Is Rotten Tomatoes responsible for the endless supply of superhero movies and unimaginative sequels?

My friend’s theory is that reducing movie ratings to a single number strips away everything that makes movies great. Instead of producing films that some people love and others hate, studios produce movies most people think are okay.

I don’t remember much about that weekend (I blame the day drinking), but the movie conversation sticks with me. We’re watching a similar phenomenon play out with AI. ChatGPT is a beta version of Rotten Tomatoes for all human knowledge.

How did we get here? Where are we going? Are we destined for a future of shitty sequels? I’m optimistic, but buckle up for a story filled with twists, turns, and special effects that won’t age well.

It’s a (not so) Small World

We don’t experience objective reality. Everything we see, hear, touch, smell, and feel is an abstraction. We’re living in The Matrix, and Morpheus hasn’t shown up with the red pill (proof there were good movies after the 80s).

In his book, The Case Against Reality: Why Evolution Hid the Truth from Our Eyes, Donald Hoffman argues that evolution leads to an understanding of reality optimized for fitness (survival) rather than truth. He likens our senses to the graphical user interface on a computer. When you drag a document into the trash can on your desktop, there’s no elf inside crumpling a piece of paper. The user interface is an abstraction.

One way to wrap your head around the concept of objective reality is to consider the electromagnetic spectrum. Humans don’t perceive Wi-Fi signals or X-rays, but we know they exist. Those wavelengths are part of objective reality but not the reality we experience.

Visible Wavelengths on the Electromagnetic Spectrum (Source: @barelymaps)

Human progress is fueled by the pursuit of objective reality. We conduct research, study microorganisms, and launch rockets to learn about the world around us. Along the way, we apply what we learn to build a safer, more prosperous, and enriching society. That's the goal, anyway.

I realize this is a long article, so here's the TL;DR: exploring objective reality generates complexity. AI is our latest solution to the complexity problem. We may be on the cusp of an Age of Discovery, but we're also pulling ourselves further away from the reality we know and love.

I’ll use “reality” as shorthand for “objective reality” in this article for brevity. I hope that doesn’t offend any theoretical physicists or evolutionary biologists.

Boundless Complexity

If exploration of reality is the engine of human progress, complexity is what comes out of the tailpipe. Mentally transport yourself back to the early 1900s. What would life be like without computers, fast fashion, or automobiles? Now, go back to the early 1800s. Now try the 1500s.

With each passing year, humans have more to learn, more decisions to make, and more options to explore. Our monkey brains don’t evolve as quickly as our understanding of reality. We do our best to cope, but most of us can’t escape the feeling that life is becoming more complicated.

Reductionist thinking is how we cope with complexity. That’s fine when it involves diligently constructed mental models. It’s less fine when we ignore complexity entirely. Here are a few examples:

Classifying people as “good” or “bad” because we don’t have enough time or information to make sense of nuanced beliefs and behaviors

Relying entirely on dogma or experts to make decisions, even when their interests, incentives, and context don’t align with our own

Embracing conspiracy theories because simple stories are easier to digest than the messy reality of unintended consequences

If those sound familiar, welcome to being a human in 2024. Our brains are incredible pattern recognition engines, but we can only store so much information in 86 billion neurons. Eventually, we have to take a few shortcuts.

Overwhelming complexity can’t be solved by regulating social media, improving education, or returning to simpler times. Those actions may provide temporary relief but won’t solve the underlying problem. We live in an increasingly complex world and are reaching the limits of what our monkey brains can process.

Where does that leave us? Before speculating where we’re headed, let’s examine what we can learn from the past.

The Past: From One to Many

Welcome to the part of the article where I distill human progress into five simple steps. This isn't a history lesson. It's my attempt to situate AI in a broader historical context.

Each step in the journey follows a similar storyline: (1) humans discover a new way to explore reality, (2) exploration generates complexity, (3) we find a way to deal with the complexity, and (4) our solution fuels more exploration. Rinse and repeat.

Let’s start at the beginning. I mean the very beginning. Imagine the first humans on Earth. How would they experience reality?

A direct but narrow view of reality (the pink thing is the person’s brain)

It would probably be like walking alone in the woods. They'd see trees, hear birds, smell damp leaves, and feel the earth beneath their feet. The first humans had a direct but narrow view of reality. That's what I'm illustrating in the first diagram.

Now, let’s fast-forward many thousands of years to the period when oral and written language were commonplace.

A broader view of reality due to shared experiences across time and space

This would be like walking in the woods with a friend. Imagine you’re feeling peckish and reach for some berries. Your friend grabs your hand and lectures you about eating random food you find in the forest. Much of what we learn in life is through the experience of others.

Language allows us to share our understanding of reality with others across space and time. Humans exploring another part of the planet can tell us what they found. Dead people can share ideas with living people. With language, we explore reality as a group rather than as individuals.

Oral and written language creates multiple versions of reality. Who you know and trust influences your worldview. Left unchecked, large social networks can lead to divergent views of reality. There’s no guarantee truth prevails.

Science is the solution to the problem I’m describing. By systematically studying the world, humans with different life experiences can converge on a shared understanding of reality.

More recently, science has revealed parts of reality hidden from biological senses. You can squint at a leaf all day, but the cellular structure is too small to see. Instruments like microscopes allow us to test hypotheses that can’t be validated with our senses.

Supercharging human senses (the pink box is a microscope)

One thing to note before moving on is that instruments represent the first break from direct observation. Viewing a leaf through a microscope differs from looking at it with the naked eye. This creates multiple mental models for a leaf — and more complexity.

Now we have a problem. We can’t expect every human to understand every scientific discovery. Most people only need to know that leaves grow on trees. However, at least a few humans should probably understand what a leaf looks like under a microscope.

So begins the era of specialization. If the illustration below looks like an organization chart, there’s a reason. Hierarchies are how humans have managed complexity for the past thousand years.

The great pyramids of humanity

The people at the bottom of the hierarchy have a narrow view of reality. Sometimes, it’s a direct view, but often it’s indirect. Your doctor listens to your heart using a stethoscope rather than an ear (if this isn’t the case, you should find a new doctor).

At the top of the hierarchy are people with the broadest view of reality. They’re in a position to make the best decisions for the group but often have little idea what’s happening at the coalface.

In the middle are my favorite people. They don’t make direct observations or critical decisions. Instead, they act as conduits, aggregating insights from below and disaggregating decisions from above.

Hierarchy is an elegant solution to complexity for a few reasons. First, it’s scalable. As complexity increases, you can add more layers. Second, it’s standardized. If a person works in one hierarchy, they can often figure out how to work in a different one. Lastly, it’s divisible. You can squish hierarchies together to create larger ones or break them apart to create smaller ones.

Unfortunately, there’s a limit to how much complexity hierarchies can manage. You can’t keep adding layers to a hierarchy (this hasn’t stopped governments from trying). Coordination also becomes a new source of complexity.

Enter AI. Narrow artificial intelligence has been with us for decades. The first general-purpose electronic computer was introduced in 1946, and we’ve been offloading work to machines ever since.

I realize this isn’t the conventional definition of narrow AI. I think about automation and AI differently than most people. If you want a more nuanced perspective, you’re in luck. I wrote the book Artificially Human: Making Sense of Automation and AI. It’s a bestseller among my family, friends, and people who enjoy arguing with me.

Our growing digital workforce (friendly robot boxes to make them less terrifying)

Historically, machines have worked at the base of the hierarchy. They diligently generate invoices and recommend Danish crime dramas to keep you glued to your television.

Each time we approach the limit of hierarchies, our digital buddies bail us out. There was a time when one in 13 working women in the U.S. was a switchboard operator. Today, humans make nearly five trillion phone calls yearly, and hardly any switchboard operators are required.

Narrow AI allows us to do more with less. It also places further levels of abstraction between humans and reality.

That’s it for the past. Let's move on to what's happening today.

The Present: Artificial Brains

Narrow AI systems can work 24 hours a day, 7 days a week. They produce more data about the world than we can absorb. They’re also a nightmare to maintain. We’re constantly scrambling to keep our digital friends working reliably and efficiently.

What if we could build powerful machines to make sense of the complexity generated by narrow AI? Maybe the new machines could even maintain the simple machines for us.

That may sound absurd, but it’s what we’re doing. The race is on to build artificial general intelligence (AGI). Companies are investing billions of dollars in AI models that reason, communicate, and experience the world like humans.

Foundation models from companies like OpenAI, Anthropic, and Google operate at a different level of complexity than narrow AI. They use neural network architectures loosely inspired by the brain. Humans can’t codify rules about reality quickly, accurately, and thoroughly enough for these new models, so we hand over raw data and let the machines find the patterns.

Early results are promising. Generative AI models are coaching recent graduates and fixing computer code. You may look at ChatGPT and think it’ll never operate autonomously. Maybe you’re right, but ChatGPT was released on November 30, 2022. We’re closer to the beginning of this phase than the end.

Machines managing machines

I don’t want to speculate on when AGI will arrive. It’s a silly debate when we don’t understand how human brains work. That said, we’re probably talking decades, not centuries. Does it matter if humans invent AGI in 2025, 2030, 2050, or 2100? Each of those horizons is the blink of an eye in the broader context of this story.

AGI is coming. The question is what it means for human progress and what new forms of complexity it’ll spawn.

The Future: Age of Discovery

Anybody claiming to know the future of AI is delusional. Early humans couldn’t have predicted the impact of language. Somebody living in the early 1900s couldn’t have predicted the impact of computers. There’s a difference between speculation and prediction. Please treat everything from this point forward as the former.

Two thoughts come to mind when I look at the “Race for AGI” illustration. First, there’s little reason for humans and machines to work in hierarchies once we have AGI. Hierarchies exist to enable human specialization, not machine specialization.

Second, AGI makes it possible to expand horizontally without expanding vertically. Humanity can cast a wider net in its exploration of reality. We need not worry about running out of humans or hierarchies collapsing under the weight of their complexity.

Progress without the hierarchies

Imagine how much more scientific research, product development, and ambitious exploration we could pursue without needing hierarchies. This doesn’t mean hierarchies will go away; specialization still has value. My point is that future pursuits won’t necessarily require armies of humans and complex hierarchies like past pursuits.

In Artificially Human, I ask, “What would living in a world with 4 billion human workers and 100 billion digital workers mean?” My working hypothesis is that this would kickstart an Age of Discovery, during which our understanding of reality expands at a breakneck pace.

There are signs we’re headed in that direction. Think about how AlphaFold accelerated human understanding of the protein folding problem. Heck, check out my website, Paper-to-Podcast. I’ve used AI to analyze nearly 900 research papers and produce 250 podcast episodes in the past year.

This transition won’t happen overnight. The creation and adoption of AGI will be slower than experts claim but faster than most people expect. In the meantime, prepare for the Rotten Tomatoes of everything.

Abstracting Abstractions

At the beginning of this article, I claimed ChatGPT is the beta version of Rotten Tomatoes for all human knowledge. What does that mean?

The digital world is an abstraction of reality. Online articles and comments are interpretations of the authors’ experiences. Instagram is a curated album of user experiences. YouTube is where people share engineered snippets of reality.

Foundation models are not trained on objective reality. They’re trained on abstractions created by humans. Just as Rotten Tomatoes adds a layer of abstraction between its users and critic reviews (abstractions themselves), generative AI adds a layer of abstraction between humans and the digital world.

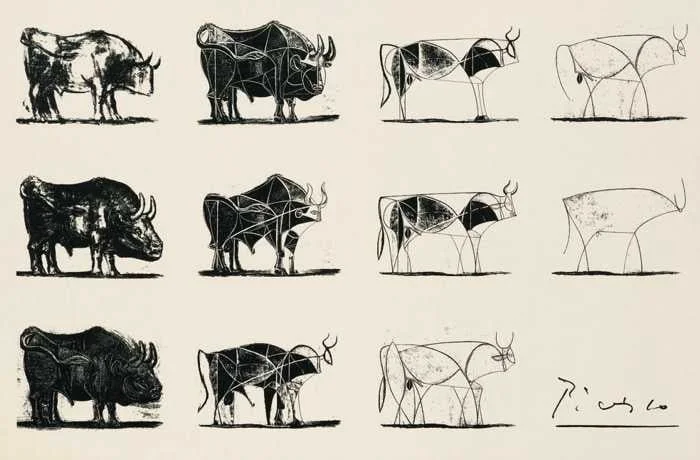

Why does this matter? Because information is lost with each level of abstraction. ChatGPT’s view of reality is vast but incomplete. It’s difficult to imagine a path to AGI that only involves abstractions. It would be like learning about the world from Pablo Picasso’s art.

Pablo Picasso’s “The Bull” series, which illustrates his abstraction process (1945)

Perhaps that’s one reason early studies show generative AI helping novice and low-skilled people most. If your mental models for a domain are poor, you can access better ones with generative AI. If your mental models are solid, you may be underwhelmed by what generative AI offers.

We’ve seen this story before with AI. There was limited training data in the early days of image recognition algorithms. Humans hadn’t labeled many images of the world. As a result, neural networks tended to underperform traditional methods like support vector machines (SVMs).

In 2009, Dr. Fei-Fei Li and her team introduced ImageNet, a database of 14 million labeled images across over 20,000 categories. By 2015, artificial neural networks had surpassed human accuracy on the ImageNet recognition benchmark. The ImageNet database isn’t reality, but it’s less of an abstraction than the databases that came before it.

AI recognizing images better than humans

I’m unsure how many more patterns AI can learn from what humans have codified in the digital world. The models keep improving, especially in domains where the digital world is roughly the same as reality (e.g., writing computer code).

I suspect we’ll see diminishing returns in domains where the digital world is an imperfect representation of reality. Even simple capabilities like temporal reasoning (understanding the concept of time) may be challenging to learn without direct exposure to the real world.

Existing AI foundation models produce abstractions of abstractions. They’re portals into a digital world humans created. All we’ve done so far is invent the Rotten Tomatoes of all human knowledge. The Age of Discovery requires one more step: directly connecting AI with reality.

The Small and the Big

Current AGI training methods will produce plenty of sequels but few originals. Most human progress doesn’t come from rehashing known patterns. It comes from discovering new patterns.

Where are we likely to find those new patterns? Probably not in the slice of reality we’ve explored for thousands of years. More than eight billion humans scour that segment every day.

In modern times, most discoveries have originated from the small and the big. Let’s begin with the small.

Each time we dig into a domain, we discover new insights that shape our understanding of reality. Digging into materials, we discovered atoms. Digging into atoms, we discovered subatomic particles. Digging into those particles, we discovered quarks. Reality is the ultimate nesting doll.

When we dig, we create complexity. We can’t simply move on to the next layer. The discovery of quarks didn’t cause us to abandon material science, chemistry, and particle physics. There’s value in each layer.

Imagine AGI capable of autonomously generating hypotheses, running experiments, and engineering products. For example, research labs have begun collecting high-quality, longitudinal gut microbiome data. It could take decades for human scientists to explore the resulting datasets fully. How much faster could AGI find the needles in that haystack?

At the other end of the spectrum is “the big.” In these domains, stepping back is more likely to improve our understanding. Thousands of humans have researched the brain, economy, and universe. In each case, a deeper understanding of the parts doesn’t seem to translate into a robust understanding of the whole.

Consolidating discoveries with AGI could highlight larger patterns we’re missing. The “hard problem of consciousness,” the Fermi paradox, and other seemingly intractable problems may be solvable by AI capable of seeing the bigger picture.

I’m excited by the prospect of an AI-powered Age of Discovery. Imagine how much we could learn about the universe, our planet, and ourselves.

That said, I’m not ruling out the possibility this all goes horribly wrong. The Terminator is a fantastic 80s movie unless you watch it as a documentary.

If AGI does kick off an Age of Discovery, one thing is clear: Life is about to get more complex.

Models All the Way Down

Imagine trying to explain your daily routine to a chimpanzee. I mash on this keyboard all day, which makes the number in my bank account go up. I then go to a building down the street where humans keep all the food. They won’t let me have any unless I give them this piece of plastic. When I come home, the number in my bank account has gone back down, which means it’s time to mash on the keyboard again.

Even if the chimpanzee could mimic the actions I described, he’d have no idea what he was doing or why it worked. His brain can’t store enough patterns to understand the complexity of my life. In the era of AGI, we’re the chimps.

Hierarchies and narrow AI create levels of abstraction, but humans can still figure out what’s happening. A CEO can spend time with a frontline employee taking customer service calls. A programmer can add lines to her code to uncover what the machine does. We’ve made the world more complex, but we can break through the complexity in narrow contexts when necessary.

That’s not how a world built on AGI works. Initially, the machines may translate what they’re doing into concepts we understand. Even then, we should be skeptical. Human decision-making is messy, and how we explain our actions is often inconsistent with what’s happening inside our brains.

Eventually, we become the chimpanzees. We're unlikely to understand even if the machines explain their logic. It would be like ChatGPT telling you to eat two anchovies, hop on one foot for 30 seconds, and sing “Happy Birthday” to cure your headache. It may work, but good luck figuring out why.

With AGI, abstraction complexity is a byproduct of human progress. We may build a safer, more prosperous, and enriching society, but we’ll have little idea why anything works.

I won’t speculate on how humans will solve the abstraction complexity problem — that’s a recipe for an article that never ends. Instead, I want to conclude with an observation about happiness.

Touching Grass

I have little doubt AGI will turbocharge economic growth. Today, it takes nine months to create a human and about two decades to educate it. Costs vary widely, but most estimates for the U.S. are in the “several hundred thousand dollars” range. AGI will scale faster and cheaper than human labor by orders of magnitude.

Growth isn’t the problem. I’m more worried about happiness. Mental health data isn’t a perfect proxy for unhappiness, but it’s difficult to say things are going well when 23 percent of people exhibit signs of mental illness. Even worse, the number is 36 percent for young people.

Widespread evidence of mental illness

I (mostly) retired at the end of 2022. Since then, I’ve noticed something about myself. I’m happiest when I’m close to objective reality. Even a few levels of abstraction make me anxious and discombobulated.

That weekend in Austin, Texas, for the F1 race is a prime example. I’ve watched races online, read articles about the teams and drivers, and driven an F1 car in Gran Turismo 7.

Everything about the in-person experience was objectively worse. I saw less of the race. I didn’t meet any drivers or teams. Nobody in their right mind would let me near an F1 car.

Yet, that weekend was defined by visceral connections to objective reality. I heard the roar of the engines and smelled the exhaust fumes. I roasted in the sun during the day and huddled in dark bars at night. Best of all, I spent the entire weekend exploring reality with a close friend.

Our brains haven’t evolved as fast as the world around us. We’re hardwired to explore reality. Machines may do it better, and there’s no outrunning the compounding layers of abstraction. However, I suspect happiness depends on touching grass now and then.

AGI will do to daily life what Rotten Tomatoes did to the movies — solve a complexity problem by adding layers of abstraction. Don’t lose sight of what makes the movie of life worth watching.

Keep exploring reality. Better yet, do it with friends. You probably won’t discover anything that moves humanity forward, but you may find something far more elusive — happiness.